The Great Credential Caper: Credential Stuffing and AI - Dan Hollinger & Christo | BSidesSF 2026

Dan Hollinger (Product Leader) & Christo (Cloudflare) – 41 min, intermediate

An exploration of how agentic AI and automated tools have made credential stuffing and CAPTCHA solving easier than ever, and how defenders must shift to evaluating authenticity rather than just automation.

The Scale of Credential Stuffing

16 billion passwords have been leaked globally. Attackers use these exposed credentials in automated credential stuffing attacks to take over accounts and sell them on the dark web.

- 41% of logins use previously exposed credentials.

- During Black Friday week, 95% of logins using compromised passwords were automated.

- Attackers use automated botnets with residential and mobile proxy IPs to bypass rate limiting.

Not only are people reusing usernames and passwords and they’re getting exposed in data breaches, but then bots are firing them up and using these left and right to just try and get into different accounts.

All it takes is one breach for these legitimate credentials to be found by malicious actors.
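One way to act on exposed credentials is to check a candidate password against a breach corpus at signup or login. The sketch below assumes the Pwned Passwords range endpoint from Have I Been Pwned (listed under Technologies), which uses k-anonymity: only the first five hex characters of the SHA-1 hash are sent. The function names are illustrative and the talk did not show this code.

```python
import hashlib
from typing import Tuple


def sha1_prefix_suffix(password: str) -> Tuple[str, str]:
    """Split the uppercase SHA-1 hex digest into a 5-char prefix and a 35-char suffix."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def range_url(prefix: str) -> str:
    """Build the range query URL; only the 5-character prefix leaves the client."""
    return f"https://api.pwnedpasswords.com/range/{prefix}"


def breach_count(suffix: str, range_body: str) -> int:
    """Look up a hash suffix in a range response made of 'SUFFIX:COUNT' lines."""
    for line in range_body.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix:
            return int(count)
    return 0  # suffix absent: not found in the corpus
```

A caller would fetch `range_url(prefix)` with any HTTP client and pass the response body to `breach_count`. A non-zero result is a reason to ask for a different password or to step up verification, not proof of compromise on its own.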

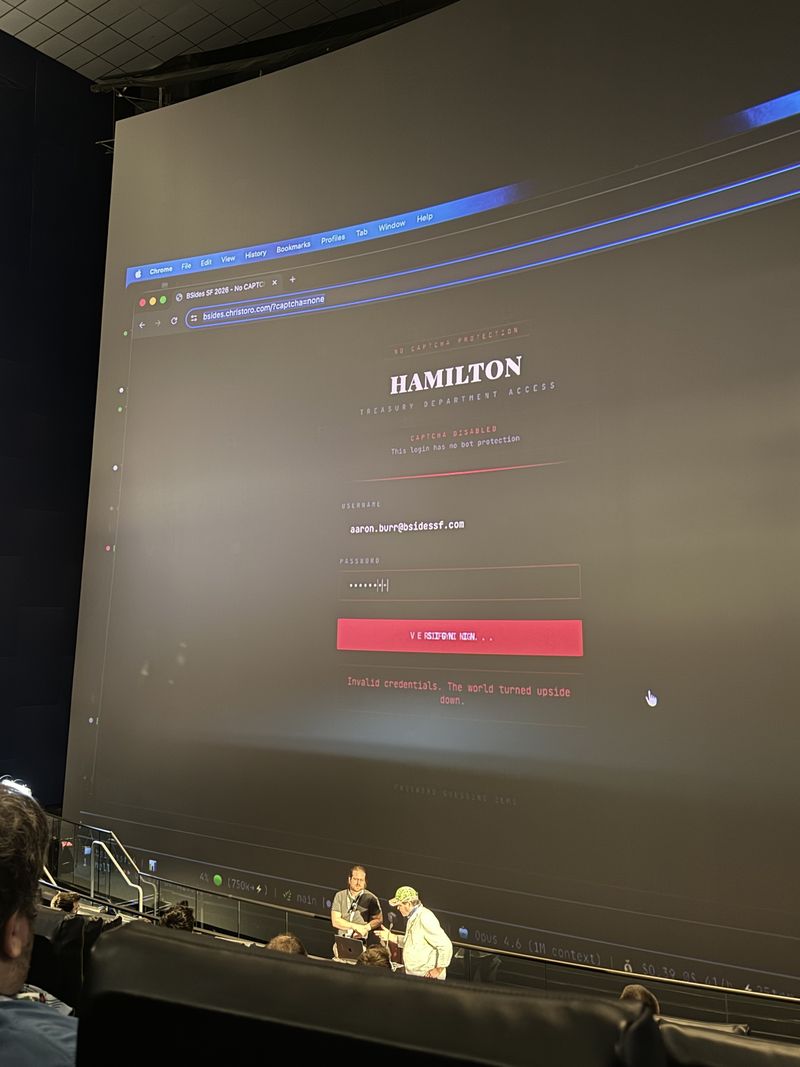

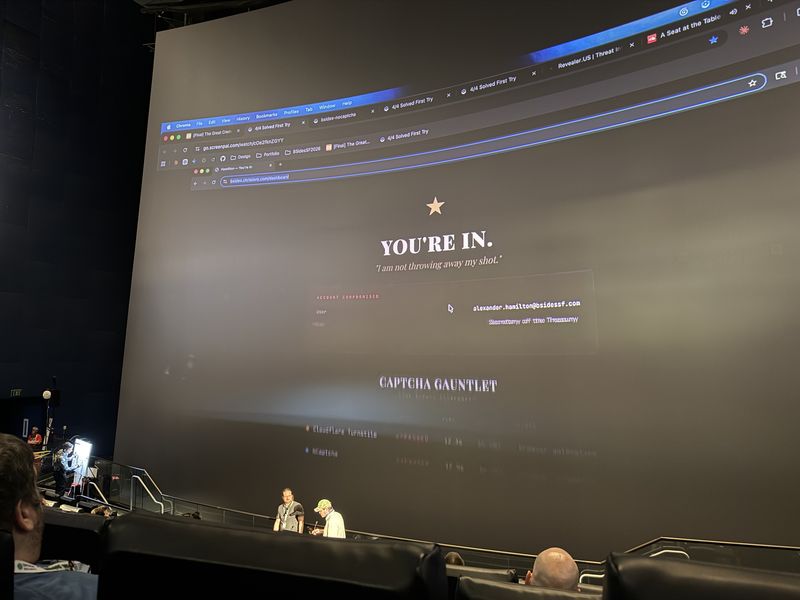

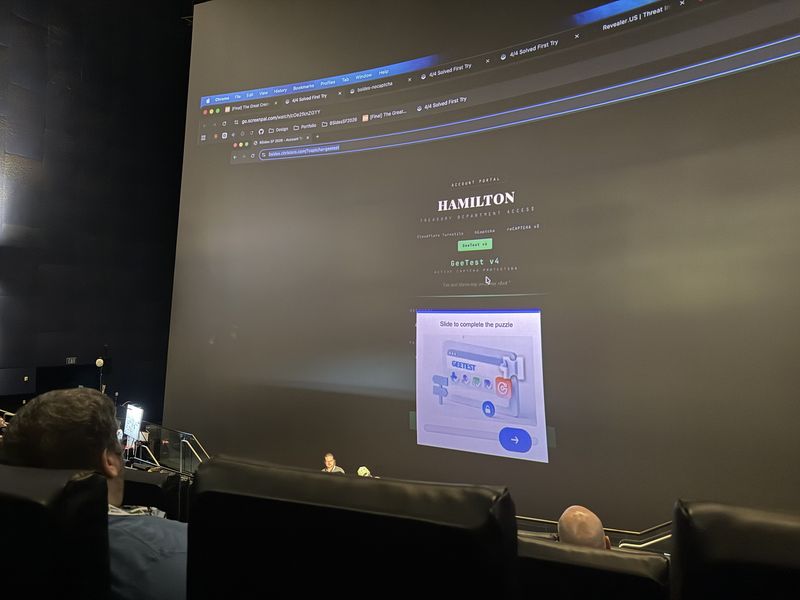

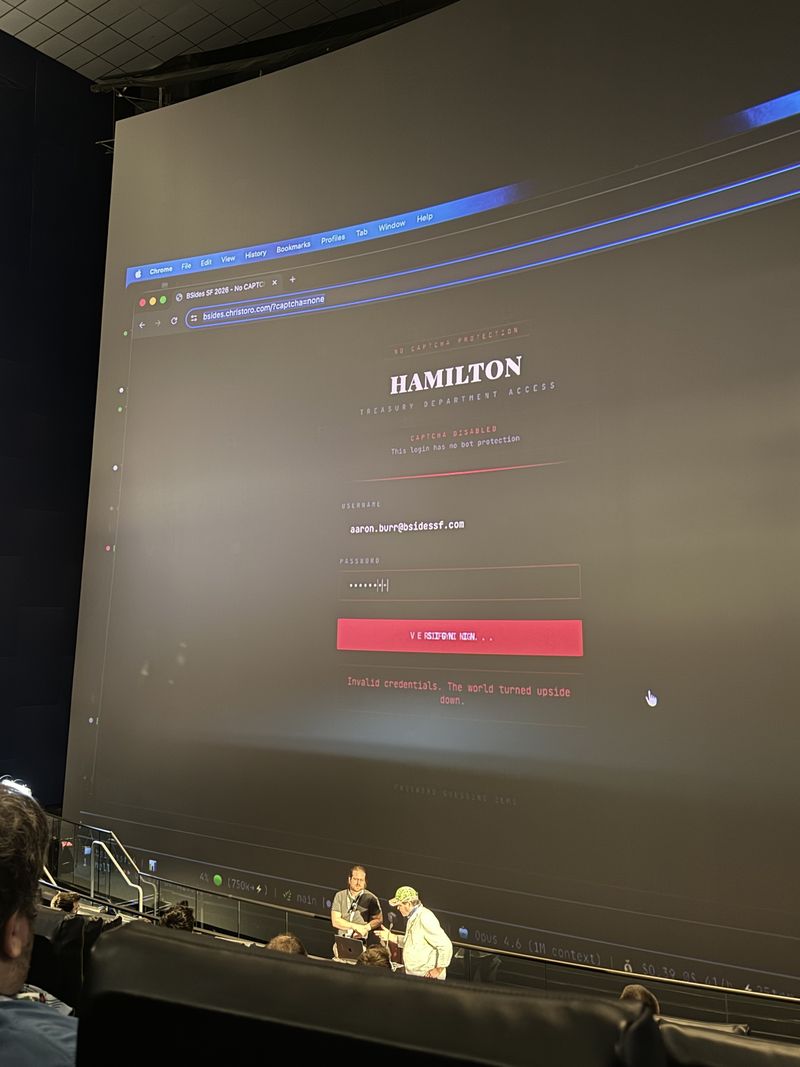

AI-Powered Attack Automation and CAPTCHA Solving

Live demos showed how AI agents like Claude Code, combined with tools like Playwright and Scrapling, can easily bypass traditional bot defenses. The AI can autonomously solve various CAPTCHAs and mimic human behavior to evade detection.

- Claude Code can use Playwright to act like a human and bypass bot scoring mechanisms.

- AI agents can autonomously solve Cloudflare Turnstile, hCaptcha, reCAPTCHA v2, and GeeTest.

- A REPL-style feedback loop lets the AI work out, through trial and error, how to bypass defenses.

- Traditional bot scores are losing efficacy because AI can easily mimic authentic human behavior.

Now all of a sudden these bot scores… it doesn’t have the same power that it used to have now that AI can solve this stuff so much easier.

I think we’re going to need to invent proof of love.

The Parfait Model: Layered Defense

Defending against modern credential stuffing requires a layered approach across four domains. The focus must shift from merely detecting automation to verifying the authenticity of the agent or user.

- Password Layer: Reduce password supply via passkeys, password managers, and SSO.

- Request Layer: Detect automated traffic, slow down exfiltration, and disrupt attacker infrastructure.

- Account Layer: Analyze post-login behavior for anomalies and step up MFA when necessary.

- Agent Layer: Establish trust and authorization for legitimate AI agents acting on behalf of users.

Defenses should have layers. Ultimately any kind of defense you’re looking for against bot automation and authenticity should be looking across these four layers.

The name of the game is to try and figure out not what’s automated but what’s authentic.
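The four layers can be read as independent signals feeding a single login decision. A minimal sketch, assuming hypothetical signal names and a placeholder threshold of 0.8; none of these values come from the talk:

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP_MFA = "step_up_mfa"
    BLOCK = "block"


@dataclass
class LoginSignals:
    # All field names are hypothetical; each maps to one layer of the model.
    password_exposed: bool    # password layer: credential seen in a breach
    automation_score: float   # request layer: 0.0 (human-like) to 1.0 (bot-like)
    behavior_anomaly: bool    # account layer: unusual post-login behavior
    agent_authorized: bool    # agent layer: verified agent acting for the user


def decide(s: LoginSignals) -> Decision:
    # An authorized agent is automated by design, so automation only counts
    # against a request when no such authorization is present.
    suspicious_automation = s.automation_score >= 0.8 and not s.agent_authorized
    # Exposed password plus unauthorized automation is the stuffing pattern.
    if s.password_exposed and suspicious_automation:
        return Decision.BLOCK
    # Any single remaining risk signal triggers a step-up challenge.
    if s.password_exposed or s.behavior_anomaly or suspicious_automation:
        return Decision.STEP_UP_MFA
    return Decision.ALLOW
```

The point of the structure is that automation is never the deciding factor by itself: the same high `automation_score` leads to different outcomes depending on what the other layers report.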

Q&A Highlights

Can you differentiate AI bots from humans in a WAF? It’s difficult because AI mimics human behavior, but defenders can look at past behavior, user agents combined with ASNs, and TLS handshake fingerprints (JA3/JA4).
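The signal combination mentioned in this answer can be sketched as a consistency check: does the TLS fingerprint match the browser the user agent claims to be, and does the network fit a browser client? The lookup tables below are placeholders. The fingerprint strings are not real JA3/JA4 values, and the ASNs come from the range reserved for documentation.

```python
from dataclasses import dataclass
from typing import List

# Placeholder data for illustration only.
EXPECTED_FINGERPRINTS = {
    "chrome": {"fp-chrome-a", "fp-chrome-b"},
    "firefox": {"fp-firefox-a"},
}
HOSTING_ASNS = {64496, 64497}  # stand-ins for datacenter networks


@dataclass
class Request:
    ua_family: str        # browser family parsed from the User-Agent header
    tls_fingerprint: str  # JA3/JA4-style hash computed at the edge
    asn: int              # autonomous system number of the client IP


def mismatch_reasons(req: Request) -> List[str]:
    """Return the reasons a request looks inconsistent; empty means no mismatch."""
    reasons = []
    expected = EXPECTED_FINGERPRINTS.get(req.ua_family)
    if expected is None:
        reasons.append("unknown user agent family")
    elif req.tls_fingerprint not in expected:
        reasons.append("TLS fingerprint does not match claimed browser")
    if req.asn in HOSTING_ASNS:
        reasons.append("browser user agent from a hosting network")
    return reasons
```

Each check is weak alone. An agent driving a real browser through a residential proxy would pass all of them, which is why the answer also points to past behavior.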

Is MFA a solution? MFA is part of the solution but adds friction and conflicts with the use case of wanting legitimate AI agents to act autonomously on your behalf.

Can sequence analytics help? Tracking how a user navigates a site, or matching keystroke and mouse patterns, can help determine whether behavior is authentic.
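As a rough illustration of the navigation side of this idea, the sketch below learns which page-to-page transitions occur in known-good sessions and scores a new session by how many of its transitions were never seen. The function names and paths are hypothetical, and treating a single-request session as fully unseen is an assumption of this sketch.

```python
from typing import Iterable, List, Set, Tuple

Transition = Tuple[str, str]


def transitions(path: List[str]) -> List[Transition]:
    """Pair each page with the one visited next."""
    return list(zip(path, path[1:]))


def learn_baseline(sessions: Iterable[List[str]]) -> Set[Transition]:
    """Collect every page-to-page transition seen in known-good sessions."""
    seen: Set[Transition] = set()
    for path in sessions:
        seen.update(transitions(path))
    return seen


def unseen_ratio(path: List[str], baseline: Set[Transition]) -> float:
    """Fraction of a session's transitions that never occur in the baseline."""
    steps = transitions(path)
    if not steps:
        return 1.0  # assumption: one request gives no navigation to vouch for it
    return sum(step not in baseline for step in steps) / len(steps)
```

A session that goes from the home page to login to the account page scores 0.0 against a baseline containing those steps, while one that posts to the login page repeatedly scores 1.0. Keystroke and mouse matching would need a separate model and is not covered here.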

Technologies

Have I Been Pwned, Claude Code, Playwright, Cloudflare Turnstile, hCaptcha, reCAPTCHA v2/v3, GeeTest, Scrapling, YubiKey, Google Gemini Flash

Frequently Asked Questions

Can AI agents solve CAPTCHAs like Cloudflare Turnstile and reCAPTCHA?

Yes. AI agents like Claude Code combined with browser automation tools can autonomously solve Cloudflare Turnstile, hCaptcha, reCAPTCHA v2, and GeeTest by mimicking human behavior, making traditional bot scores far less effective.

What is the Parfait model for defending against credential stuffing?

The Parfait model is a layered defense across four domains: the password layer (passkeys, SSO), the request layer (detecting automated traffic), the account layer (post-login behavior analysis), and the agent layer (establishing trust for legitimate AI agents).

How many leaked passwords are used in credential stuffing attacks?

16 billion passwords have been leaked globally, and 41% of logins use previously exposed credentials. During Black Friday week, 95% of logins using compromised passwords were automated.